
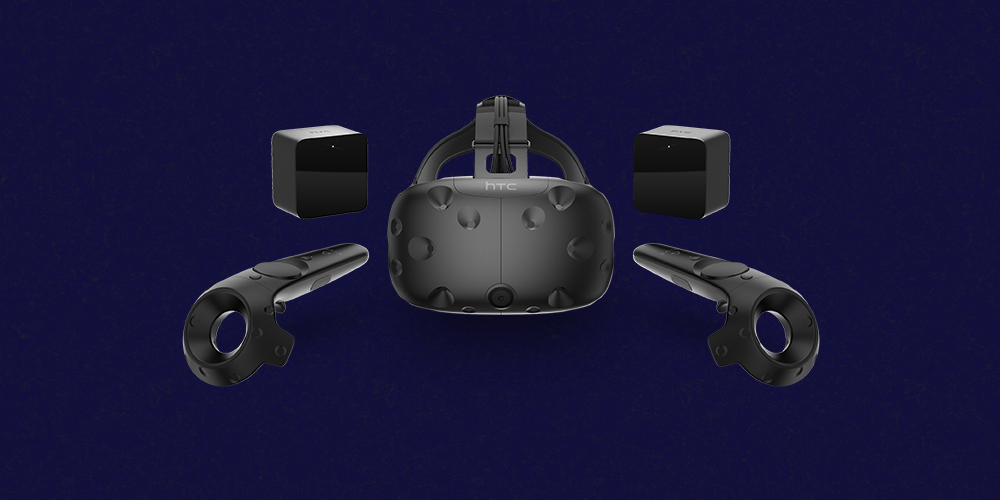

Experiences with the Valve/HTC Vive VR

This past week at The International, I managed to get some time with the Vive, the virtual reality project from Valve and HTC. I'd never tried VR before, but stories from friends with Oculus Rift developer kits, along with the general progress the industry has made, really got me interested. The Vive is a combination of a…